Grasping Big O Notation: Your Algorithm's Speedometer

The Basics of Algorithmic Time Complexity

When writing or utilizing algorithms in real-life applications, we need to understand their performance characteristics - how fast or slow they can be. This is especially relevant when algorithms become part of specialized and critical systems like the code that determines the landing spot of a SpaceX rocket preparing to land on Mars.

Think about a developer named Fred who is in charge of that code. He has to choose between two algorithms: simple search and binary search.

Both algorithms solve the same problem, but their running times grow at different rates as the input grows. Binary search is faster, which matters because Fred has only 10 seconds to calculate the landing spot. On the other hand, simple search is much easier to implement, which lowers the risk of bugs. That is crucial, because nobody wants a bug when landing a spaceship!

The Importance of Time Complexity

To make a wise decision, Fred decides to compare both algorithms on a list of 100 elements. He assumes that checking one element takes 1ms.

Simple search may have to check every element, so it needs 100ms (it runs in O(n) time, where n is the number of elements). Binary search halves the remaining range with each check, so it needs only 7ms (it runs in O(log n) time, and log₂ 100 ≈ 6.6 rounds up to 7 checks). But what happens if the list grows significantly? With around 1 billion elements, binary search would take ~30ms, while simple search would take around 11 (yes, eleven) days.

This hypothetical scenario shows that the running times of different algorithms grow at very different rates, which is why time complexity analysis matters. What counts is not only how long an algorithm takes, but how its running time scales with input size. And that's where Big O Notation comes into play.

| # of elements | Simple Search | Binary Search |
|---|---|---|
| 100 | 100ms | 7ms |
| 10,000 | 10 seconds | 14ms |
| 1,000,000,000 | 11 days | 30ms |
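The step counts behind the table can be reproduced with a short Python sketch. It assumes, as Fred does, that each check of one element costs 1ms, so the number of checks equals the running time in milliseconds. The function names `simple_search` and `binary_search` are illustrative; both return the index found (or `None`) together with the number of checks made. Searching for the last element is a worst case for both functions as written.

```python
def simple_search(items, target):
    """Check elements one by one: O(n) checks in the worst case."""
    checks = 0
    for index, item in enumerate(items):
        checks += 1
        if item == target:
            return index, checks
    return None, checks


def binary_search(items, target):
    """Halve the sorted search range on each check: O(log n) checks."""
    low, high = 0, len(items) - 1
    checks = 0
    while low <= high:
        mid = (low + high) // 2
        checks += 1
        guess = items[mid]
        if guess == target:
            return mid, checks
        if guess < target:
            low = mid + 1   # target can only be in the upper half
        else:
            high = mid - 1  # target can only be in the lower half
    return None, checks


# range() behaves like a sorted list without storing its elements,
# so even a billion-element "list" costs almost no memory here.
for n in (100, 10_000, 1_000_000_000):
    _, checks = binary_search(range(n), n - 1)
    print(f"{n:,} elements: binary search needs {checks} checks")
```

This prints 7, 14 and 30 checks, matching the table. By contrast, `simple_search(range(100), 99)` returns `(99, 100)`: all 100 elements are checked. At 1ms per check, a simple search over 1,000,000,000 elements needs 10⁹ ms, roughly 11.6 days.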

Unpacking Big O Notation

Big O Notation is a mathematical notation that describes an upper bound on how an algorithm's running time grows as the input size grows. In practice, it is usually quoted for the worst-case scenario. To take a few examples:

- Binary search has a time complexity of O(log n), also known as Logarithmic Time.
- Simple search scales linearly, with a time complexity of O(n), also known as Linear Time.
- Quicksort, a fast sorting algorithm, takes O(n * log n) time on average (its worst case is O(n²)).
- Selection sort, a slower sorting algorithm, scales as O(n²), referred to as Quadratic Time.
- A notoriously slow approach is the brute-force solution to the Traveling Salesman Problem, which has a time complexity of O(n!), or Factorial Time.
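To make quadratic time concrete, here is a minimal Python sketch of selection sort that also counts comparisons. For a list of n elements it always makes n(n-1)/2 comparisons, which grows as O(n²). The `comparisons` counter is added purely for illustration.

```python
def selection_sort(values):
    """Return a sorted copy of values and the number of comparisons made."""
    items = list(values)  # work on a copy so the input is left untouched
    comparisons = 0
    n = len(items)
    for i in range(n - 1):
        # Find the smallest element in the unsorted tail items[i:].
        smallest = i
        for j in range(i + 1, n):
            comparisons += 1
            if items[j] < items[smallest]:
                smallest = j
        # Move it to the front of the unsorted tail.
        items[i], items[smallest] = items[smallest], items[i]
    return items, comparisons


for n in (10, 100, 1_000):
    _, comparisons = selection_sort(range(n, 0, -1))
    print(f"{n:,} elements: {comparisons:,} comparisons")
```

The loop prints 45, 4,950 and 499,500 comparisons: multiplying the input size by 10 multiplies the work by roughly 100, the signature of quadratic growth.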

Let's dive deeper into the Traveling Salesman Problem, since its brute-force solution is a famous example of frighteningly high time complexity.

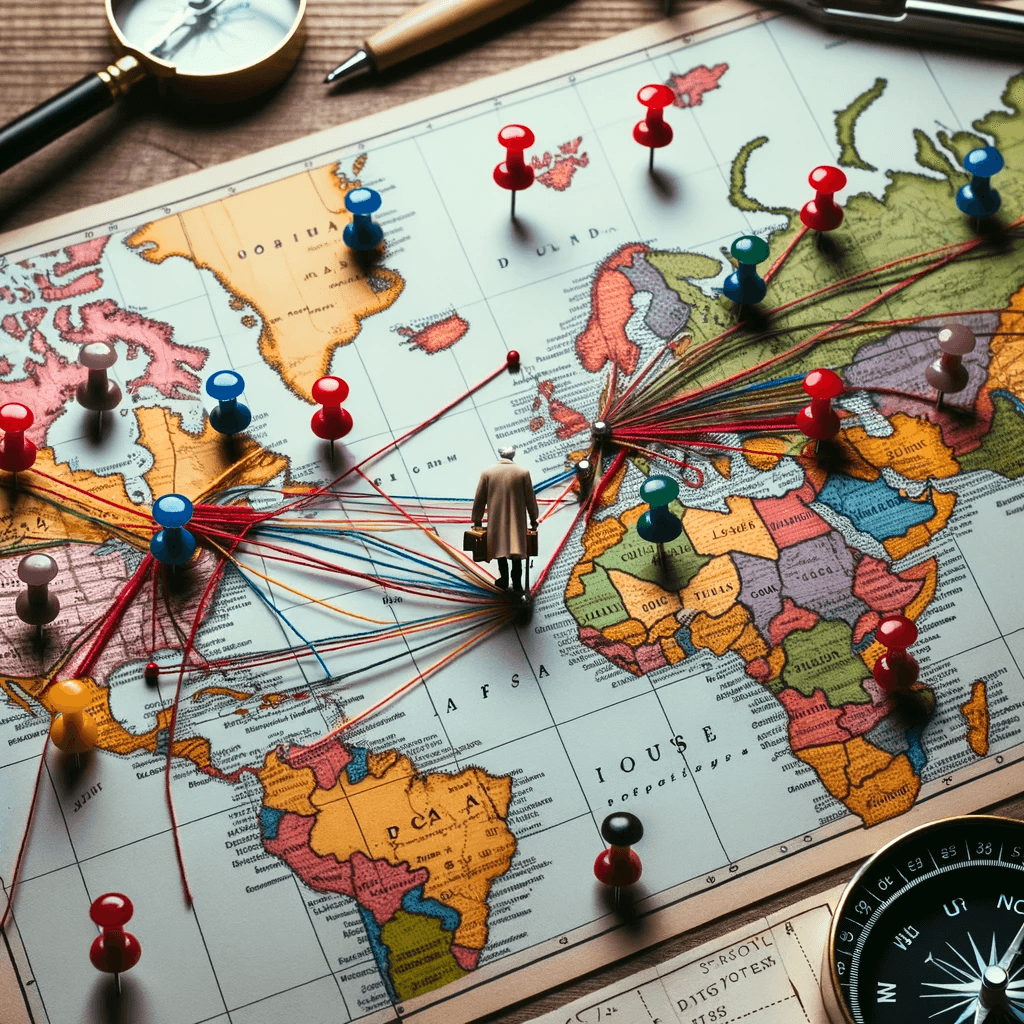

An Overview of the Traveling Salesman Problem

The Traveling Salesman Problem is a combinatorial optimization problem. A salesman needs to travel to multiple cities, visit each one exactly once, and return to the starting point. The aim is to find the shortest possible route.

Here you can see how the number of operations grows factorially with the number of cities:

| Cities | Operations |
|---|---|
| 6 | 720 |
| 7 | 5,040 |
| 8 | 40,320 |
| ... | ... |
| 15 | 1,307,674,368,000 |
| ... | ... |
| 30 | 265,252,859,812,191,058,636,308,480,000,000 |

In summary, for n cities, checking every possible ordering takes n! (n factorial) operations. That's O(n!) time complexity, or factorial time.
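A brute-force solver can be sketched in a few lines of Python with `itertools.permutations`. This is an illustrative sketch, not an efficient TSP solver: the distance matrix is made up, and because every route here starts and ends at city 0, it checks (n-1)! orderings instead of n!. The growth is still factorial, since each extra city multiplies the work.

```python
import math
from itertools import permutations


def brute_force_tsp(dist):
    """Try every route that starts and ends at city 0; return the shortest.

    dist[a][b] is the distance from city a to city b.
    Returns (best_route, best_length, routes_checked).
    """
    n = len(dist)
    best_route, best_length = None, math.inf
    routes_checked = 0
    # Fixing city 0 as the start leaves (n - 1)! orderings of the others.
    for order in permutations(range(1, n)):
        route = (0, *order, 0)
        length = sum(dist[a][b] for a, b in zip(route, route[1:]))
        routes_checked += 1
        if length < best_length:
            best_route, best_length = route, length
    return best_route, best_length, routes_checked


# Hypothetical symmetric distances between four cities.
distances = [
    [0, 2, 9, 10],
    [2, 0, 6, 4],
    [9, 6, 0, 3],
    [10, 4, 3, 0],
]
print(brute_force_tsp(distances))  # ((0, 1, 3, 2, 0), 18, 6)
```

With 4 cities the solver checks 3! = 6 routes; with 16 cities it would already have to check 15! = 1,307,674,368,000.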

Time complexity analysis and Big O Notation are crucial for designing efficient algorithms and systems. Whether you're writing a function to sort a small list or coding rocket landing software, understanding how your program's running time scales with the size of its input can make the difference between code that runs in milliseconds and code that runs for days.

Understanding Space Complexity

Space complexity is a term used in the study of algorithms to describe the amount of memory (space) an algorithm requires to execute. Understanding space complexity is as important as knowing time complexity, as it helps you consider the efficiency of your algorithm from a different angle.

Often, a trade-off exists between time and space complexity. By using more space (e.g., storing pre-computed values), you can sometimes reduce an algorithm's time complexity (e.g., by reusing the stored values instead of recalculating them). This is commonly referred to as a space-time tradeoff.
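Memoization is a classic example of this trade-off. The Python sketch below uses Fibonacci numbers, an example chosen here for illustration rather than one from the text: the naive version recomputes the same subproblems over and over and takes exponential time, while the memoized version uses the standard library's `functools.lru_cache` to store each result, so every value is computed only once. That gives O(n) time at the cost of O(n) extra memory for the cache.

```python
from functools import lru_cache


def fib_naive(n):
    """Exponential time: fib_naive(n - 2) is recomputed inside fib_naive(n - 1)."""
    if n < 2:
        return n
    return fib_naive(n - 1) + fib_naive(n - 2)


@lru_cache(maxsize=None)  # unbounded cache: trades memory for speed
def fib_memo(n):
    """O(n) time once cached, O(n) extra space for the stored results."""
    if n < 2:
        return n
    return fib_memo(n - 1) + fib_memo(n - 2)


print(fib_naive(25))  # 75025
print(fib_memo(100))
```

`fib_memo(100)` returns almost instantly, whereas `fib_naive(100)` would not finish in any reasonable amount of time.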

Let's break this down through an example related to social networks. Suppose you construct an algorithm to find whether two users are friends.

One approach would be to go through all of user A's connections and check whether each one is user B (a simple linear search). This approach has low space complexity (you're not storing any extra data), but its time complexity is O(n), where n is the number of user A's friends.

Another approach would be to store all of user A's friends in a data structure known as a hash set. To check whether user B is a friend of A, you look B up in the set directly. This improves the lookup time to O(1) on average, but it increases space complexity, because the hash set stores extra data (one entry per friend).
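Both approaches fit in a few lines of Python. The user names and function names here are hypothetical. Python's built-in `set` is a hash set, so the membership test `user_b in friend_set` takes O(1) time on average, while the list version scans up to n entries.

```python
def is_friend_linear(friends_of_a, user_b):
    """O(n) time, O(1) extra space: scan user A's friend list."""
    for friend in friends_of_a:
        if friend == user_b:
            return True
    return False


def build_friend_set(friends_of_a):
    """O(n) time once, O(n) extra space: copy the friends into a hash set."""
    return set(friends_of_a)


def is_friend_hashed(friend_set, user_b):
    """O(1) average time per lookup in the hash set."""
    return user_b in friend_set


friends_of_a = ["bob", "carol", "dave"]  # hypothetical users
friend_set = build_friend_set(friends_of_a)
print(is_friend_linear(friends_of_a, "carol"))  # True
print(is_friend_hashed(friend_set, "erin"))     # False
```

Building the set costs O(n) time up front, so the hash set pays off when you check many users against the same friend list.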

Big O Notation in Real-World Applications

In the real world, understanding Big O notation has a massive influence on how solutions are developed for large-scale systems. Here are a few examples:

Search Engines

Search engines like Google use data structures known as inverted indices to store and search for information. An inverted index associates each keyword with a list of documents (web pages) containing that keyword, so searching for web pages related to a keyword becomes a matter of finding the keyword in the index. If the keywords are kept in sorted order, that lookup is well-suited to binary search, which runs in O(log n) time. This is critical given the enormous number of keywords and web pages a search engine indexes.
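Here is a toy Python sketch of that idea, assuming a tiny hypothetical document collection and simple whitespace tokenization; real search engines use far more elaborate index structures. The keywords are stored in a sorted list, and the standard library's `bisect.bisect_left` performs the O(log n) binary search.

```python
import bisect


def build_index(documents):
    """Map each keyword to the sorted IDs of the documents containing it.

    documents: dict of document ID -> text.
    Returns (keywords, postings): a sorted keyword list and a parallel
    list of document-ID lists.
    """
    index = {}
    for doc_id, text in documents.items():
        for word in set(text.lower().split()):
            index.setdefault(word, []).append(doc_id)
    keywords = sorted(index)
    postings = [sorted(index[word]) for word in keywords]
    return keywords, postings


def lookup(keywords, postings, word):
    """Binary-search the sorted keyword list: O(log n) comparisons."""
    word = word.lower()
    i = bisect.bisect_left(keywords, word)
    if i < len(keywords) and keywords[i] == word:
        return postings[i]
    return []


docs = {
    1: "Big O notation",
    2: "binary search is fast",
    3: "big data needs fast algorithms",
}
keywords, postings = build_index(docs)
print(lookup(keywords, postings, "big"))   # [1, 3]
print(lookup(keywords, postings, "fast"))  # [2, 3]
```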

Streaming Services

Big O Notation plays a significant role in how services like Netflix make recommendations. Their algorithms must quickly analyze large amounts of data (e.g., previously watched movies, viewer ratings, and preferences) to make recommendations, so they need to scale well as the data grows. A poorly designed algorithm (with, say, quadratic time complexity) could lead to slow recommendations, hurting the user experience.

Database Query Optimization

Databases use various algorithms and data structures to store and retrieve data efficiently, and Big O notation describes the performance of these operations. For instance, many databases index data with B-Trees, because search, insertion, and deletion in a B-Tree take logarithmic time, so these operations stay fast even on large datasets.
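The Python sketch below shows only the search half of a B-Tree, on a small hand-built tree. The class and function names are hypothetical, and insertion and deletion (which split and merge nodes to keep the tree balanced) are omitted. Each node holds several sorted keys, so the search runs a binary search inside the node and then descends one level. Because a B-Tree stays balanced, its height grows logarithmically with the number of keys, so only a few nodes are visited even for large datasets.

```python
import bisect
from dataclasses import dataclass, field


@dataclass
class BTreeNode:
    keys: list                                    # sorted keys stored in this node
    children: list = field(default_factory=list)  # empty for leaf nodes


def btree_search(node, key):
    """Return True if key is in the tree rooted at node."""
    while node is not None:
        # Binary search within the node's sorted keys.
        i = bisect.bisect_left(node.keys, key)
        if i < len(node.keys) and node.keys[i] == key:
            return True
        if not node.children:
            return False  # reached a leaf without finding the key
        # children[i] covers the keys that fall between keys[i - 1] and keys[i].
        node = node.children[i]
    return False


# A small hand-built tree: the root splits the keys into three ranges.
root = BTreeNode(
    keys=[10, 20],
    children=[
        BTreeNode(keys=[1, 5]),
        BTreeNode(keys=[12, 15]),
        BTreeNode(keys=[25, 30]),
    ],
)
print(btree_search(root, 15))  # True
print(btree_search(root, 7))   # False
```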

Last Word on Big O Notation

Understanding Big O notation ranks among the key steps in becoming a competent developer. Whether you're writing simple code to sort a list, or working on complex, large-scale applications dealing with billions of data points, an awareness of time and space complexity helps you choose the right algorithms, data structures, and design decisions.

Big O Notation gives you a lens for spotting potential bottlenecks in your programs, so you can write code that runs efficiently, whatever your application's scale. It also helps you understand the trade-offs between time and space and optimize according to the specific needs of your project.

In real-world applications ranging from search engines to streaming services and database design, a solid grasp of Big O Notation pays off: faster, more efficient systems that can handle growing amounts of data.

Happy coding, and remember - the quest for efficiency is a developer's journey with no end, just like the unending learning and growth we get to experience in this fascinating field.

Until next time, keep exploring, keep coding, and most importantly, keep optimizing!